A day of AI-assisted coding for my blog

I've written before about dev productivity being an elusive creature and about how coding assistant tools still had room to grow. Today I saw firsthand what it looks like when those tools start to close that gap.

I spent a full day using Kiro, an AI coding agent, as my coding partner on this blog’s infra and tech stack upgrades. Not just writing code, but the full development workflow: upgrading dependencies, fixing bugs, writing tests, running builds, deploying to Vercel, and hardening security. Here's what that actually looked like.

The plan

I hadn't worked on this blog since October 2024, and many of its dependencies had newer releases that were begging to be upgraded. So the goals for my agent were straightforward:

- Upgrade all dependencies to their latest stable versions

- Identify and fix performance issues

- Add unit tests for core functionality

- Do a security review and fix any vulnerabilities

- Improve SEO

- Deploy cleanly to production

Back in 2024, standing up this blog was a multi-day project. Without any AI coding tools, this upgrade would’ve also taken me a few days. With an AI coding partner, it took 2 hours.

Putting Kiro to work

Speed wasn’t the first obvious gain; parallelism was. Kiro lets me spin up agents straight from my prompts, and I wanted to take advantage of that. While one agent was upgrading TypeScript from v5 to v6 and fixing breaking changes, another was running the build to catch errors, and a third was updating the documentation. Tasks that would normally be sequential became concurrent.

This maps directly to what the DevEx research calls shortening feedback loops. Instead of the usual cycle of making a change, waiting for a build, fixing an error, and waiting again, the loop compressed dramatically: errors surfaced immediately, fixes were applied, and the next task was already in progress.

Examples of what got done:

- Tailwind CSS 3 → 4: A major version upgrade that required migrating the config to the new CSS-based theme system, updating PostCSS, and fixing @apply directives in CSS modules. One agent read the docs, made the changes, and verified the build. Just understanding what changed in the new version would easily have taken me a whole day.

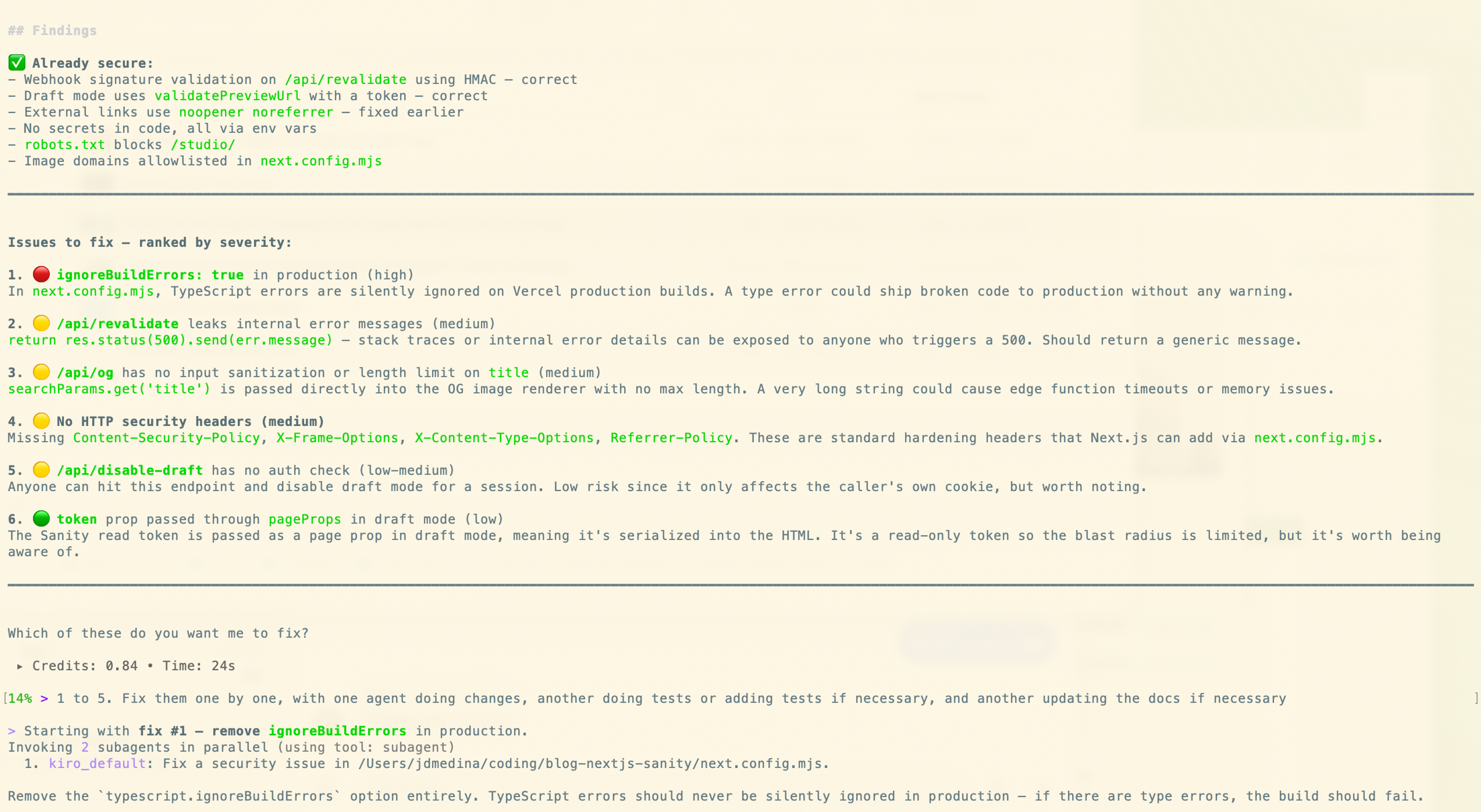

- 7 security fixes: Missing HTTP security headers, an API route leaking internal error messages, an unvalidated input on the OG image endpoint, and more. Each fix came with a test to make sure the UX was unaffected.

- Build pipeline hardening: A pre-push git hook that runs a clean install and a full build before every push. It caught several phantom dependency issues (packages the code relied on that weren't properly declared) that previously only showed up in Vercel's clean CI environment. This is possibly my favorite tangible improvement: before, the back-and-forth of validating builds on Vercel wasted a lot of my time.

- Performance improvements: Kiro identified that post pages had a Speed Insights performance score of 64, caused by incorrect sizes attributes on the hero image and uncompressed images from Sanity, and fixed both in less than a minute.
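The post doesn't show the security fixes or name the web framework, so here is a framework-agnostic TypeScript sketch of three of the fix categories listed above, using the standard Fetch API `Request`, `Response`, and `Headers` globals (available in Node 18+). It validates the OG image endpoint's input, keeps internal error messages out of responses, and attaches common HTTP security headers. The `title` query parameter, the 100-character cap, the handler name, the `renderOgImage` callback, and the specific header values are all assumptions for illustration, not what Kiro actually shipped.

```typescript
// Assumed baseline of common HTTP security headers; not necessarily the exact set Kiro added.
export const SECURITY_HEADERS: Record<string, string> = {
  "X-Content-Type-Options": "nosniff",
  "X-Frame-Options": "DENY",
  "Referrer-Policy": "strict-origin-when-cross-origin",
  "Strict-Transport-Security": "max-age=63072000; includeSubDomains",
  "Permissions-Policy": "camera=(), microphone=(), geolocation=()",
};

// Hypothetical cap on OG image title length.
const MAX_TITLE_LENGTH = 100;

// Returns a cleaned title, or null when the input should be rejected.
export function validateTitle(raw: string | null): string | null {
  if (raw === null) return null;
  const cleaned = raw
    .replace(/\s+/g, " ") // collapse tabs, newlines, and repeated spaces
    .replace(/[\u0000-\u001f\u007f]/g, "") // drop any remaining control characters
    .trim();
  if (cleaned.length === 0 || cleaned.length > MAX_TITLE_LENGTH) return null;
  return cleaned;
}

// Copies the given headers and layers the security headers on top.
function withSecurityHeaders(init?: Headers | Record<string, string>): Headers {
  const headers = new Headers(init);
  for (const [name, value] of Object.entries(SECURITY_HEADERS)) {
    headers.set(name, value);
  }
  return headers;
}

// Hypothetical OG image handler; `renderOgImage` stands in for the real image renderer.
export async function handleOgRequest(
  req: Request,
  renderOgImage: (title: string) => Promise<Response>,
): Promise<Response> {
  const title = validateTitle(new URL(req.url).searchParams.get("title"));
  if (title === null) {
    return new Response("Invalid title", {
      status: 400,
      headers: withSecurityHeaders({ "Content-Type": "text/plain; charset=utf-8" }),
    });
  }
  try {
    const rendered = await renderOgImage(title);
    return new Response(rendered.body, {
      status: rendered.status,
      headers: withSecurityHeaders(rendered.headers),
    });
  } catch (err) {
    // Keep the details in server logs; the client only sees a generic message.
    console.error("OG image render failed:", err);
    return new Response("Internal Server Error", {
      status: 500,
      headers: withSecurityHeaders({ "Content-Type": "text/plain; charset=utf-8" }),
    });
  }
}
```

Passing the renderer in as a parameter is just a way to keep the sketch self-contained and testable; in a real app, security headers are more often set once globally (for example in the hosting or framework config) rather than per route.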
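The pre-push hook from the build pipeline item would typically be a short shell script; to keep one language for the examples, here is a minimal TypeScript sketch of the same idea that a hook could invoke. It assumes npm and a `build` script in package.json (the post doesn't say which package manager or commands Kiro used), and the names `PRE_PUSH_STEPS` and `runPrePush` are hypothetical. `npm ci` deletes `node_modules` and reinstalls strictly from package-lock.json, so packages that exist only in your local `node_modules` are gone, just as on a clean CI machine, and the build that follows fails locally instead of on Vercel.

```typescript
import { execSync } from "node:child_process";

// Assumed steps: npm with a "build" script in package.json (not specified in the post).
// `npm ci` removes node_modules and installs exactly what package-lock.json lists,
// mirroring a clean CI environment before the build runs.
export const PRE_PUSH_STEPS: readonly string[] = ["npm ci", "npm run build"];

// Default runner: executes a shell command with its output streamed; throws on non-zero exit.
function runInShell(cmd: string): void {
  execSync(cmd, { stdio: "inherit" });
}

// Runs each step in order and stops at the first failure.
// Returns true only if every step succeeded. The runner is injectable for testing.
export function runPrePush(run: (cmd: string) => void = runInShell): boolean {
  for (const step of PRE_PUSH_STEPS) {
    try {
      run(step);
    } catch {
      console.error(`pre-push: "${step}" failed, aborting push`);
      return false;
    }
  }
  return true;
}
```

From the hook itself, something like `process.exit(runPrePush() ? 0 : 1)` would do the job, since git aborts the push whenever the pre-push hook exits with a non-zero status. How the script gets launched (a plain `.git/hooks/pre-push` file, Husky, or another hook manager) is left open here.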

What it means for developer productivity

Going back to the DevEx framework: all three of its pillars (feedback loops, flow state, and cognitive load) were present here, and AI coding agents are moving the needle on each of them:

- Feedback loops get shorter because the agent runs builds, tests, and checks in parallel while you're already thinking about the next problem.

- Flow state improves because you stay at the problem-solving level. You're not context-switching into “now I need to look up the Tailwind 4 migration guide” or “now I need to remember the exact git hook syntax.” The agent handles the lookup and execution; you make the judgment calls.

- Cognitive load drops because you're not holding the entire dependency graph, migration checklist, and deployment pipeline in your head simultaneously. You describe the outcome you want and review the result.

But there are caveats

It's not magic. A few things still required careful human judgment:

- Kiro made some assumptions during the Tailwind migration that needed steering.

- Several missing dependencies only surfaced in Vercel's clean CI environment. I only knew to look for them because this issue had bitten me in the past (and Kiro fixed them!), but they shouldn't have been missed in the first place.

- Security decisions (like whether to add a Content Security Policy) required me to proactively ask Kiro to run a security review.

My main takeaway: the coding agents are excellent at execution, and humans are still needed for judgment and for defining the strategy. Knowing what to fix, why it matters, and when to stop is still firmly in human territory.

Where things are heading

A year ago I wrote that coding assistant tools needed to get much better at understanding codebase context. Today, that gap is noticeably smaller. The tools aren't just autocompleting code anymore; they're reading your project structure, understanding your deployment pipeline, and making multistep decisions across files. The magical moment I described (when the tools just work) is getting closer, and today felt like a preview of it.